Image Features Influence Reaction Time:

A Learned Probabilistic Perceptual Model for Saccade Latency

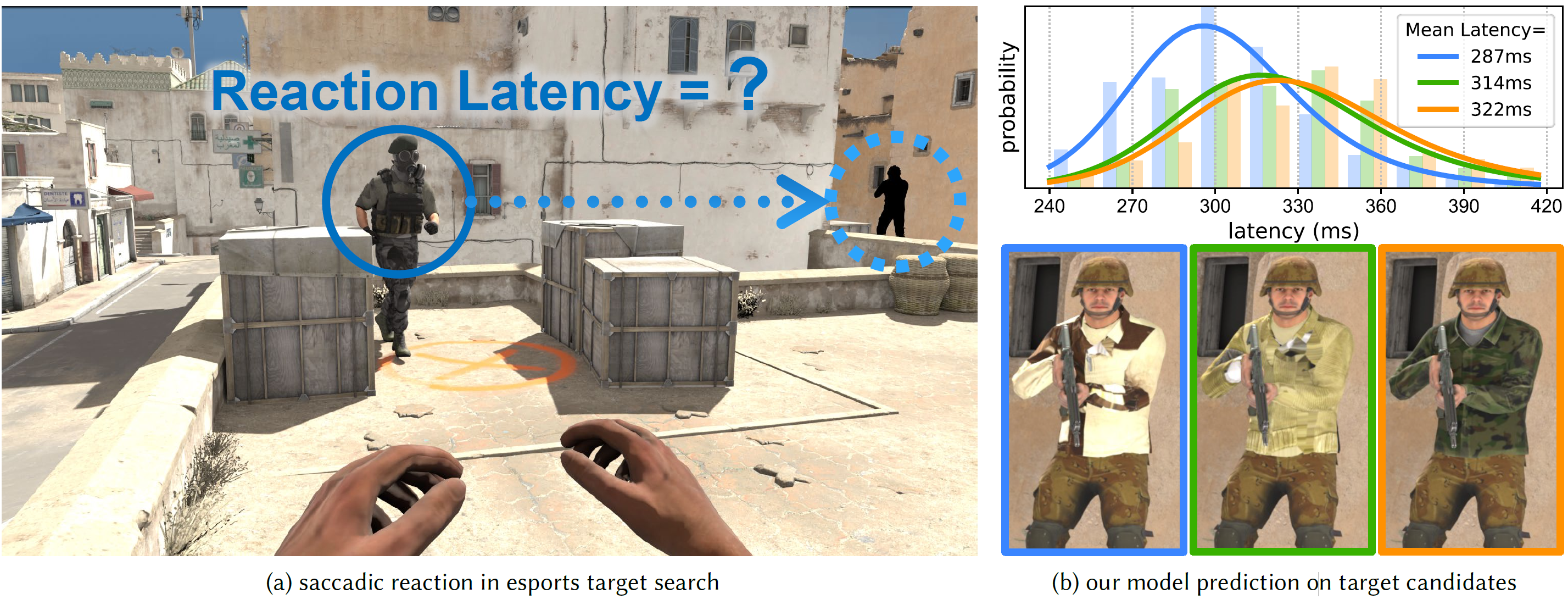

We propose a model that predicts the reaction latency for users to identify and saccade to a peripheral target. Based on psychophysical data collected for stimuli with varying visual characteristics, we model the likelihood distribution of the time users take to process, react, and saccade to a target. When the black placeholder in (a) is replaced with each of the three target candidates shown in (b), the resulting retinal images are perceptually near-identical in terms of visual acuity, with all FovVideoVDP scores >9.5 [Mantiuk et al. 2021]. However, they may trigger significantly faster (leftmost in (b)) or slower (rightmost in (b)) reactions, with latency differences of up to about 35 ms, which can significantly affect task performance. 3D asset credits: Valve Corporation, and Slavyer at Sketchfab Inc.

Abstract

We aim to ask and answer an essential question: "how quickly do we react after observing a displayed visual target?" To this end, we present psychophysical studies that characterize the remarkable disconnect between human saccadic behaviors and spatial visual acuity. Building on the results of our studies, we develop a perceptual model to predict temporal gaze behavior, particularly saccadic latency, as a function of the statistics of a displayed image. Specifically, we implement a neurologically inspired probabilistic model that mimics the accumulation of confidence that leads to a perceptual decision. We validate our model with a series of objective measurements and user studies using an eye-tracked VR display. The results demonstrate that our model's predictions are in statistical alignment with real-world human behavior. Further, we establish that many sub-threshold image modifications commonly introduced in graphics pipelines may significantly alter human reaction timing, even if the differences are visually undetectable. Finally, we show that our model can serve as a metric to predict and alter the reaction latency of users in interactive computer graphics applications, and thus may improve gaze-contingent rendering, the design of virtual experiences, and player performance in e-sports. We illustrate this with two examples: estimating competition fairness in a video game with two different team colors, and tuning display viewing distance to minimize player reaction time.
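To make the idea of "accumulation of confidence" concrete, the following is a minimal sketch of a generic drift-diffusion accumulator, a textbook way of modeling a noisy decision process. It is an illustrative assumption and not necessarily the formulation used in the paper. The function name `simulate_latencies` and the parameters `drift`, `threshold`, and `noise` are hypothetical and are not fitted to any data; how image statistics would map to the accumulation rate is not specified here.

```python
import numpy as np

def simulate_latencies(drift, threshold=1.0, noise=1.0, dt=2e-3,
                       n_trials=1000, max_time=3.0, seed=0):
    """Simulate decision latencies of a 1D drift-diffusion accumulator.

    Each trial accumulates noisy evidence from zero until it first reaches
    `threshold`. All parameters are hypothetical and in arbitrary units;
    time is in seconds.
    """
    rng = np.random.default_rng(seed)
    n_steps = int(max_time / dt)
    # Euler-Maruyama increments: deterministic drift plus Gaussian noise.
    increments = (drift * dt
                  + noise * np.sqrt(dt) * rng.standard_normal((n_trials, n_steps)))
    evidence = np.cumsum(increments, axis=1)
    crossed = evidence >= threshold
    reached = crossed.any(axis=1)           # trials that hit the threshold in time
    first_step = crossed.argmax(axis=1)     # index of the first crossing per trial
    return (first_step[reached] + 1) * dt   # latency of each completed trial

# Hypothetical comparison: a stimulus that yields faster evidence accumulation
# (larger drift) versus one that yields slower accumulation.
fast = simulate_latencies(drift=8.0)
slow = simulate_latencies(drift=4.0)
print(f"mean latency, high drift: {fast.mean() * 1000:.0f} ms")
print(f"mean latency, low drift:  {slow.mean() * 1000:.0f} ms")
```

For a continuous accumulator with positive drift and a single upper threshold, the mean first-passage time is `threshold / drift`, so a larger accumulation rate shifts the whole latency distribution earlier. In this sketch the output is a distribution of latencies rather than a single number, which mirrors the probabilistic framing in the abstract.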

Introduction Video

Citation

Budmonde Duinkharjav, Praneeth Chakravarthula, Rachel Brown, Anjul Patney, Qi Sun

Image Features Influence Reaction Time: A Learned Probabilistic Perceptual Model for Saccade Latency.

ACM Transactions on Graphics 41(4) (Proceedings of ACM SIGGRAPH 2022)

BibTeX

Downloads

Paper

Supplementary Material

Code

Data (coming soon)

Coverage

- ACM Press Release

- NVIDIA Technical Blog Post

- SIGGRAPH 2022 Best Paper Award Blog Post

- arXiv Page

- NVIDIA Project Page

- NYU Project Page

Acknowledgements

The authors would like to thank Chris Wyman for his valuable suggestions. The research is partially supported by the NVIDIA Applied Research Accelerator Program and the DARPA PTG program. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of DARPA.